OK, Boomerors… the ‘90s and ‘00s new world of technology and globalization

Throughout this history, we’ve followed the Boomers from their birth after World War II to their teenage years in the ‘50s/’60s to their 20-something malaise in the ‘70s and, most recently, their “settling-down” into middle-age in the ‘80s. Though young people aged 18-20 gained the right to vote with the 26th Amendment in the early ‘70s, it is only at this point, in the 1990s, that a member of the “Baby Boom” would be realistically eligible for the “emperor” position. (The Constitution set an age minimum of 35 years for chief executive, but usually a decade more of life experience is needed to be a serious contender.) The first year of the “Baby Boom,” 1946, produced three presidents born within three months of one another (Clinton, Bush II, and Trump). The last year of the Boom, 1964, produced the wife of another president who took office shortly after the turn of the century (M. Obama).

Boomers have retained their status as the largest American generation of the 20th century and now of the 21st. As Boomers retire and live longer, healthier lives than preceding generations did, millennials (those who came of age around the turn of the millennium in 2000) and younger generations will have their work cut out for them to support their elders.

This next round of Commanders-in-Chief also stands out as being the first “emperors” born after the Second World War—commanding the U.S. Army without serving in the national military themselves. (Clinton was studying in England on scholarship during the peak of the Vietnam War, and Bush II served as a pilot in the Texas Air National Guard, though it’s debatable to what degree—if any—he received preferential treatment that kept him out of the war overseas.)

As with WWII’s end, the sudden end of the Cold War turned old allies into enemies, as Third World countries fought over by the Soviets and the Americans (especially on the Korean peninsula and in the Middle East) became dangerous threats to American national security and peace of mind now that the Soviet Union had crumbled. International terrorism, sponsored or supported not by individual nations but by extranational terror cells, replaced the “Communist threat” as the 20th century petered out. As the “Bush (II) Doctrine” in the early 2000s stated, “containment” was not sufficient to combat this globalized presence that could slip through international borders.

Globalization increased exponentially as, just like the interstate highway system in the early Cold War, a Defense Department initiative developed at the very tail-end of the Cold War changed the country and the entire world—in this case, an “Internet” capable of maintaining communications even in the unlikely event of a worldwide nuclear strike. With the advent of cell phones in the ‘90s and their marked improvement in the ‘00s, a global communications system was literally at people’s fingertips.

The dawn—and the yawn—of the millennial age and contemporary political polarization

Though his track record as president was certainly mixed, Jimmy Carter appealed to voters in 1976 because he represented a down-home outsider to Washington politics, and it was just this brand of Southern appeal that the next two “emperors” relied on for their two-term occupations of the Commander-in-Chief seat (Clinton from 1993-2001; Bush II from 2001-2009). Bill Clinton relied on his image as a scholarship student and the product of a single-parent home to appeal to middle-class and working-class Americans. Even though Bush II obviously had the same pedigree as Bush I, his public image was that of a man of the people, more a Texas rancher in the mold of Reagan than a product of New England, as his father was.

Yet, for all their appeal, interest in partisan politics was noticeably waning, as it had been since the high-water mark of 1968. Both these administrations were marked by no small degree of mistrust, first in “Slick Willie” and then in “Dubya,” as political infighting led to deadlock in Washington and a growing sense of malaise, if not withdrawal, among the voting public. While Clinton felt that he should hit the ground running with a goal of the Democrats since the Truman years, health care reform, he also felt that his wife, Hillary Rodham Clinton, would be a better public face of the proposed reforms. The plan backfired notoriously, and led in part to Republicans reclaiming Congress in 1994. In 1996, for the first time in recent memory, fewer than half of eligible voters participated in the presidential election. By the 2000s, Americans were more intensely interested in reality television than reality: more votes were cast for American Idol than for the American president. Small wonder, then, that the “emperor” elected in 2016 came from this world of reality television rather than traditional political channels. [Keep in mind that, unlike in presidential elections, one could vote multiple times for American Idol.]

A by-product of the distrust in the executive branch that followed the Watergate scandal was the use of independent prosecutors, who investigated President Clinton’s past. One such prosecutor, Kenneth Starr, uncovered an affair involving Clinton and a former intern. While infidelity is not a crime (and Clinton was not the first and probably not the last president guilty of such trysts), perjury and obstruction of justice are crimes, and evidence pointed to the president covering up the infidelity by encouraging others to lie under oath. When Clinton was impeached in 1998, the first president to be tried in such a fashion since “Emperor Johnson I” 130 years earlier, he revealed to a televised audience not his down-home campaign appeal but instead his scholarly legalistic background when, under oath, he asked the prosecutor to “define sex” and stated, in his most notorious case of rhetorical acrobatics, “It depends upon what the meaning of the word ‘is’ is.” While the impeachment did not result in conviction or removal from office, the scandal further demonstrated the degree to which the two major political parties were becoming more pugnacious and personal in their attacks on “the enemy.”

The 2000 election, the closest in U.S. history since 1876, ultimately came down to a decision from the U.S. Supreme Court, which halted a recount of Florida’s ballots and gave the ultimate victory to the candidate who received fewer popular votes: George W. Bush. Given that voter turnout was barely over 50%, it remained the case, as it had for some time, that a quarter of the citizens was openly satisfied with this result. Uniquely in this case, however, another quarter of the voting population felt betrayed by a democracy and a Court (in Justice Scalia’s later words, “a committee of nine unelected lawyers”) that they felt were part of the problem, in league with “the enemy.”

Uncertainty between the Cold War and the “War on Terror”

Real enemies outside the United States remained, even after the fall of the Soviet Union. The U.S. had supported, covertly in most cases, “freedom fighters” who fought Soviet influence in the Cold War, and who now turned on the West, especially the United States. Even radical extremists inside the United States began to turn to terrorist tactics: the deadliest pre-9/11 terrorist bombing, the 1995 attack on a federal building in Oklahoma City, was committed by a Persian Gulf War veteran who wanted to “take a stand” against his own federal government. The first bombing of the World Trade Center in 1993 and the attack on the U.S.S. Cole in 2000 were clear signs that “anti-American” violence was not going away in the decade-long “peace” between 1991 and 2001.

Although a former hippie activist character in the aptly-titled 1990 film Flashback predicted that “The ‘90s are going to make the ‘60s look like the ‘50s,” we should not take the opposite view: that the ‘90s were not another ‘60s, but another ‘50s. Though millennials might view this time as idyllic, it is a view just as nostalgic as the Boomers’ view of the 1950s. The power vacuum created by the collapse of the Soviet Union, both in the “Soviet bloc” and in the “Third World,” led to plenty of international conflict, even if that conflict was felt only intermittently in the U.S. President Clinton, though wary of full-on military commitments after the Persian Gulf War, nonetheless landed ground troops to calm unrest in Somalia, Haiti, and the Balkans (twice); in the latter case, the aim was to curb the “ethnic cleansing” of dictator Slobodan Milosevic in Bosnia and Kosovo. While the Clinton Administration was often wary of using the term “genocide” (as such a term required U.N. states’ intervention) to describe the civil wars in Rwanda and Bosnia, the latter conflict especially reminded many observers of the World War I era more than a post-Cold War “tranquility.”

The world’s first nuclear “empire” also had its work cut out for it in keeping other “nuclear empires” from emerging in the decade(s) after the Cold War: Pakistan and India, enemies since their joint independence from Great Britain in the late 1940s, both developed nuclear weapons, turning their regional conflict into a new Cold War in South Asia. North Korea, Iran, and Iraq were all suspected of developing nuclear weapons programs, occasionally denying U.N. inspection teams permission to examine facilities. These three countries were singled out by “Emperor Bush II” in a phrase echoing World War II and Ronald Reagan both: an “axis of evil.”

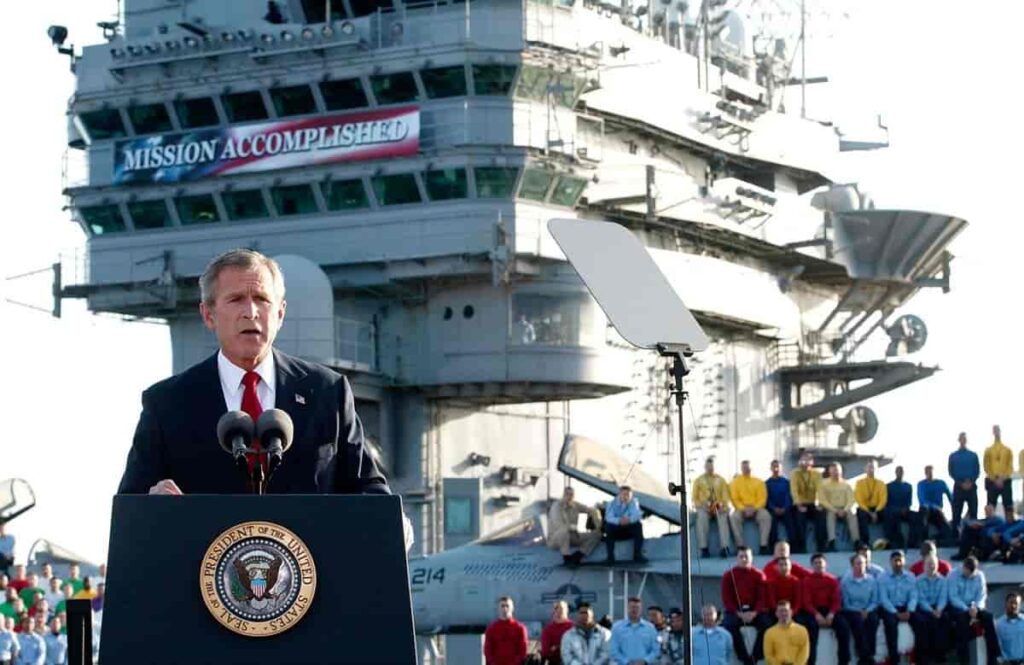

While the “War on Poverty” and “War on Drugs” seemingly ended in a concession that both poverty and drugs would “win” to some degree, Bush II declared a “War on Terror” following the deadliest terrorist attack yet: a coordinated series of plane crashes into symbols of U.S. power (the World Trade Center and the Pentagon) on the morning of Tuesday, September 11, 2001, by agents of Al Qaeda. The creation of the Department of Homeland Security and the passage of the “Patriot Act” gave the government unprecedented access to citizens’ property and personal data, with widespread congressional and citizen support. The fundamentalist-controlled government of Afghanistan received an ultimatum to support the U.S.’s hunt for Al Qaeda and its leader, Osama bin Laden. After that government refused the ultimatum, President Bush launched “Operation Enduring Freedom” in Afghanistan, followed two years later by the much-more-controversial “Operation Iraqi Freedom” after Saddam Hussein again gave U.N. inspectors the run-around in Iraq. Bush II became convinced that Hussein was holding on to “weapons of mass destruction” (WMDs), meaning chemical and/or nuclear weapons, that threatened American national security, even if no connections could be discovered between Al Qaeda and the notably-secular Muslim dictator.

Unlike in Korea and Vietnam, the U.S. did not have widespread ally support for the action against Iraq, though limited numbers of troops did join from close allies like the U.K. In March 2003, college campuses again witnessed large-scale protests, as they had 30+ years earlier. Unlike in those earlier protests, however, students did not strike or occupy campuses and, perhaps most significantly, there was no longer any draft requiring anyone who did not support the conflict to serve in the military.

As in the Cold War, the U.S. achieved its short-term objectives: both Saddam Hussein and, eventually, Osama bin Laden were neutralized as threats to national security, though, embarrassingly, no WMDs were ever found in Iraq. Also as in the Cold War, the U.S. created power vacuums in unstable regions of the world that continue to pose problems today.

The state of the union(s) in the 21st century

After the record-level deficit of the Reagan/Bush I era, Clinton stole from the old conservative playbook and sought a balanced federal budget. In 1998—for the first time in nearly three decades—there was not a deficit, but a surplus. The debate over what to do with this surplus in the late ‘90s demonstrated that the “party wars” were not going away with the Cold War, either. While Clinton and the Democratic Party wanted to put more money into federal programs, Republicans wanted to offer tax cuts.

The economy of the late ‘90s didn’t exactly need any incentive to grow, however—the widespread commercial revolution of the Internet, the “dot com boom,” continued through the early Bush II years, until that bubble burst and a new one—real estate speculation—took its place. (The bursting of that bubble in the subprime mortgage crisis of 2007-2008 led to recession and the election of Barack Obama.) Overall, however, the U.S. had exited the Cold War as it had exited World War II—in excellent economic condition, finding itself the wealthiest nation—with the largest military—on the planet. With the development of economic communities like the North American Free Trade Agreement (NAFTA) in Clinton’s first term and the European Union (EU) coming into full force in Bush II’s first term, it seemed like the boom years for the industrialized world were here to stay with unprecedented international economic cooperation.

However, an alarming trend became apparent in the 2000 census: unlike in other industrialized nations, the gap between the wealthiest and poorest U.S. citizens was only growing with time, and a greater proportion of total wealth was held by the top 5% of citizens. Furthermore, economic and academic success was still statistically linked to race. After Hurricane Katrina left many poorer citizens of New Orleans homeless in 2005 and the Federal Emergency Management Agency (which had done well to provide for the families of the victims of Oklahoma City ten years earlier) failed to improve short-term basic living conditions, critics of Bush II (like critics of his father and of Reagan) accused him of being racist. (This inspired the crass words of none other than the rightful owner of this Website, Kanye West: “George Bush doesn’t care about black people… [awkward pause].”) Given the statistical white advantage, assisting wealthier Americans with tax cuts might seem, especially to liberal media outlets, to be assisting white people over minorities.

Bush II, like most every “emperor” before him, looked to education to offer equality of opportunity to all students, regardless of race or class. With the bipartisan cooperation of the post-9/11 era, Bush II signed the No Child Left Behind Act into law in 2002, which held all schools across the country to the same levels of performance via standardized tests, and assigned consequences (meted out by state governments) for schools that did not meet those standards consistently. The result was widely criticized for increasing “teach to the test” strategies of education and for lack of federal oversight, leaving the enforcement to the states in the federalist spirit of the Republican platform. The rise of enrollment in charter schools, magnet schools, and private schools generally is an indicator of the limited successes of the Act, the main tenets of which have since been abandoned.

Lack of faith in public institutions, including public education, is a sign of larger trends in 20th and 21st century American life that continue into the present, and will likely continue well into the future. It’s noteworthy that the most recent amendment, the 27th (ratified in 1992), was proposed by James Madison as part of the original Bill of Rights, and is perhaps the most cynical of the lot: the rule that Congress cannot make rules that affect representatives’ salaries until an election can first intervene. It’s as if we expect our leaders in public and private life to be self-serving. The private lives of our own “Caesars,” like those of the Romans’, continue to preoccupy us. While the Griswold decision established a right to privacy, with the ubiquity of social media one must ask, in the parlance of our times, “Is privacy still a thing?” The culture wars have turned private, with attacks and defenses conducted on social media platforms and, as a result, the voice of young people in particular has become less and less publicly exhibited. The campaigns of the next “emperors” were ever more reliant on posts, tweets, and Internet ads. How will the “emperors” of your generation rise to the “emperor” seat? What technological revolutions await, and will half of the American populace once again ignore the process?